Karpathy Autoresearch: Reality vs. Expectations

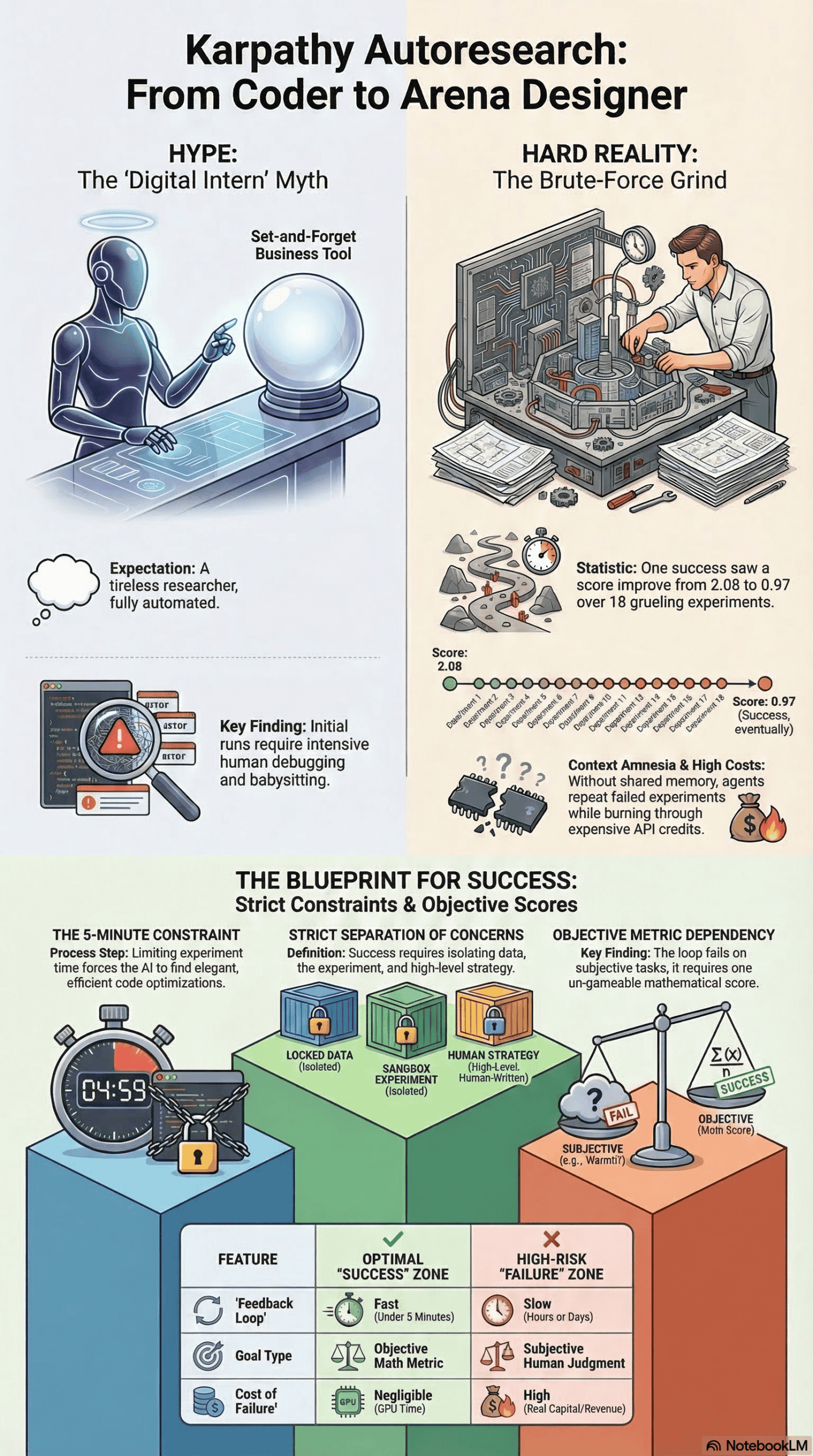

The artificial intelligence industry often gets bogged down by hype around autonomous agents. Developers and business leaders consistently hear that we are on the verge of deploying digital employees capable of running entire companies, managing complex software engineering tasks, and conducting open-ended research while we sleep. However, anyone who has actually tried to string together a complex agent workflow knows that these promises run well ahead of what the tools deliver. Autonomous agents frequently suffer from context amnesia, loop degradation, and an inability to consistently score their own progress without heavy human intervention.

The conversation shifted dramatically with the release of Andrej Karpathy’s “Autoresearch” repository. Karpathy Autoresearch is a minimalist framework that automates the scientific method for machine learning. It isolates an AI agent in a highly constrained loop where it reads a human-written strategy document, modifies a single training script, runs a strict five-minute experiment, and autonomously decides whether to keep or discard the changes based on an objective score.
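The keep-or-discard cycle described above can be sketched in a few lines. This is a toy illustration, not code from the repository: `run_loop`, `toy_evaluate`, and `make_toy_proposer` are hypothetical names, the "agent" is a random perturbation, and the sketch assumes a lower score is better, as with a validation loss.

```python
import random
from dataclasses import dataclass, field


@dataclass
class LoopState:
    best_params: dict
    best_score: float
    history: list = field(default_factory=list)


def run_loop(propose, evaluate, baseline, n_experiments):
    """Keep a candidate only if it beats the best score so far (lower is better)."""
    state = LoopState(best_params=baseline, best_score=evaluate(baseline))
    for i in range(n_experiments):
        candidate = propose(state.best_params)   # stand-in for the agent's edit
        score = evaluate(candidate)              # stand-in for the timed experiment
        kept = score < state.best_score
        if kept:
            state.best_params, state.best_score = candidate, score
        # Discarded candidates are logged too, so they are not silently lost.
        state.history.append((i, candidate, score, kept))
    return state


def toy_evaluate(params):
    # Hypothetical objective: squared distance from an "ideal" learning rate.
    return (params["lr"] - 0.3) ** 2


def make_toy_proposer(seed=0):
    rng = random.Random(seed)

    def propose(params):
        # Small random change to the current best configuration.
        return {"lr": params["lr"] + rng.uniform(-0.1, 0.1)}

    return propose


state = run_loop(make_toy_proposer(), toy_evaluate, {"lr": 1.0}, 50)
print(f"best lr={state.best_params['lr']:.3f} score={state.best_score:.4f}")
```

The important property is that the comparison is mechanical: the proposer can be as creative as it likes, but only the score decides what survives.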

This framework quickly drew a lot of attention, leading to claims that this loop could be applied to everything from automated outbound marketing to live financial trading. To separate the reality from the hype, I analyzed dozens of real, documented attempts to implement and adapt the Autoresearch framework across various domains. My role here is to synthesize … to cut through the noise and document what actually happens when humans hand over the keys to an autonomous research loop.

How This Analysis Was Conducted

To understand the practical realities of the Autoresearch framework, I studied a wide array of documented implementations, repository forks, and developer post-mortems. I reviewed real failures and successes shared across technical YouTube channels, coding forums, and X (formerly Twitter) discussions.

I observed patterns across highly technical machine learning engineers attempting to replicate Karpathy’s original nanoGPT tuning, as well as adaptations by developers who pushed the loop into uncharted territories. For example, some developers modified the codebase to optimize cold email copy, generate traditional Irish sheet music, and even execute live arbitrage trades on cryptocurrency prediction markets.

The insights below come from the observed experiences of these developers and researchers. I am extracting the structural patterns, failure points, and breakthrough moments from their combined hundreds of hours of testing. This analysis is grounded entirely in the measurable outcomes and shared logs of those who have actually put the code into production.

Initial Expectations vs. Reality

When beginners first encounter Karpathy Autoresearch, they often feel intensely optimistic. The overarching expectation is that they have just discovered “grad student descent as a service” – a tireless, brilliant digital intern that requires almost zero management.

What People Expect

Common expectations include the belief that the system can be pointed at any generalized business problem to instantly generate value. Because the core repository is simple, consisting of only about 630 lines of Python code, people assume it is endlessly flexible. Beginners expect they can:

- Clone the repository.

- Replace the machine learning training script with a script for generating Facebook ad creatives or writing sales emails.

- Define a vague goal like “increase revenue.”

- Wake up the next morning to a highly profitable business.

Users also expect the AI agent to behave with the same careful reasoning as a human researcher. They assume the agent will remember everything it tried the night before, invent new ways of doing things, and handle any bugs or bottlenecks it encounters. They expect a hands-off, “set it and forget it” magic bullet that operates entirely outside of human constraints.

What Actually Happens in Practice

The reality of running an Autoresearch loop is far messier, more expensive, and significantly more constrained than the initial expectations suggest. When reviewing real attempts to boot up this workflow, I noticed a consistent narrative arc: early excitement, followed by immediate friction, eventually leading to either a frustrating failure or a hard-won, highly specific breakthrough.

In practice, the first several runs almost always require intensive human babysitting. Developers noted that when they first initiated the agent, it frequently proposed code changes that simply failed to compile, crashing the loop entirely. Instead of going to sleep and waking up to a highly optimized model, early adopters found themselves staring at their terminals, manually debugging the agent’s syntax errors or answering its clarifying questions before it could successfully complete a single automated cycle.

Once the loop is finally stable, the agent begins its work. In one documented case, involving the optimization of a small language model for generating sheet music, the agent started with a baseline validation score of 2.08 (lower is better). At that level the model was effectively generating musical gibberish that sounded like a child mashing piano keys, as shown in this analysis video. Over several hours, the agent tested radical changes: it altered the model’s depth, modified the batch sizes, and tweaked the learning rate warmup schedules. In this specific instance, the agent whittled the score down to 0.97 over 18 experiments, eventually producing coherent, original melodies.

However, this progression is rarely linear. I observed patterns where the AI, lacking a proper historical context window, would repeat the exact same failed experiments it had tried days earlier. In other cases, developers ran the loop on maximum settings using advanced models like Codex, only to realize that an hour of autonomous generation had yielded just three successful experiments while burning through massive amounts of API credits. The autonomous progression is real, but it is an expensive, brute-force grind rather than a stroke of creative genius.

Common Failure Points in Autoresearch Implementation

Based on everything I have analyzed, attempts to adapt the Autoresearch framework fail consistently when developers ignore the strict boundaries that make the original repository work. These failures can be broken down into clear categories:

The Absence of an Objective, Frozen Metric

The system absolutely requires a single, unambiguous number to judge success or failure. I reviewed attempts where people tried to use the loop for standard software engineering or outbound marketing without a fast, objective score. You cannot ask an agent to measure subjective concepts like “reputation,” “warmth,” or “code readability.” If the evaluator function is subjective or constantly shifting, all prior experiments are invalidated, and the loop descends into chaos.

Slow Feedback Loops

Karpathy’s original design relies on a strict five-minute time limit for every experiment. I noticed a consistent pattern of failure when developers tried to adapt the loop to tasks that take hours or days to resolve, such as waiting for SEO traffic to update or waiting 72 hours for cold email reply rates. If your experiment takes 60 minutes, you can only run 24 experiments a day, compared with 288 at five minutes each, which destroys the statistical advantage of running hundreds of rapid, automated iterations.
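The throughput arithmetic is easy to check for any feedback delay. A small sketch, assuming experiments run strictly one after another with no overhead between them (`experiments_per_day` is a hypothetical helper name):

```python
def experiments_per_day(minutes_per_experiment):
    """Whole sequential experiments that fit into 24 hours."""
    if minutes_per_experiment <= 0:
        raise ValueError("minutes_per_experiment must be positive")
    return int((24 * 60) // minutes_per_experiment)


# Five-minute runs, one-hour runs, and a 72-hour cold email wait.
for minutes in (5, 60, 72 * 60):
    print(f"{minutes:>5} min per experiment: {experiments_per_day(minutes)} per day")
```

With a 72-hour wait, not even one experiment completes per day, which is why slow external feedback breaks the loop's main advantage.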

High Cost of Failure

In a local machine learning sandbox, the cost of a failed iteration is merely five minutes of GPU time. However, when developers attached this loop to real-world assets, the cost of failure skyrocketed. For instance, testing this loop on a live financial trading algorithm means that every failed hypothesis directly drains real capital. AI agents explore aggressively; if the cost of a bad iteration is not practically zero, the system is fundamentally unsafe to run autonomously.

Agent Amnesia and Context Collapse

Without a carefully managed shared memory layer, agents suffer from amnesia. In many failed setups, the loop simply killed the agent after an attempt and restarted a fresh instance with zero memory of what had just failed. As highlighted in this YouTube discussion on agents, if the agent is not strictly forced to read and write to a centralized “learnings” document (like a shared markdown file), it will waste compute cycles retrying discarded hypotheses.
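One way to approximate such a shared "learnings" document is an append-only markdown file that the harness checks before accepting a new hypothesis. The sketch below is an assumption about how this could be wired up, not the format any particular fork uses; the helper names and the `- hypothesis | score | verdict` line layout are hypothetical.

```python
import tempfile
from pathlib import Path


def load_tried(path):
    """Return the set of normalized hypotheses already recorded in the log."""
    if not path.exists():
        return set()
    tried = set()
    for line in path.read_text(encoding="utf-8").splitlines():
        if line.startswith("- "):
            # The hypothesis is the text before the first " | " separator.
            tried.add(line[2:].split(" | ")[0].strip().lower())
    return tried


def record(path, hypothesis, score, kept):
    """Append one experiment result as a markdown bullet."""
    verdict = "kept" if kept else "discarded"
    with path.open("a", encoding="utf-8") as f:
        f.write(f"- {hypothesis} | score={score:.4f} | {verdict}\n")


def is_new(path, hypothesis):
    """True if this hypothesis has not been logged before."""
    return hypothesis.strip().lower() not in load_tried(path)


# Usage with a throwaway directory standing in for the project folder.
with tempfile.TemporaryDirectory() as tmp:
    log = Path(tmp) / "learnings.md"
    record(log, "Double the batch size", 1.2345, kept=False)
    demo_repeat_is_new = is_new(log, "double the batch size")
    demo_fresh_is_new = is_new(log, "Shorten the warmup")

print(demo_repeat_is_new, demo_fresh_is_new)
```

Exact string matching only catches literal repeats. A freshly restarted agent can rephrase the same idea, so the file still has to be fed back into its prompt for the log to do its job.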

What Consistently Works (Across Many Experiences)

While the failures are plentiful, there are repeatable patterns of success across the experiences I studied. The users who succeed with this primitive respect its inherent constraints and trade-offs.

The Power of the Five-Minute Constraint

What consistently works is using a ruthless, unbending time constraint. By limiting every single training run to exactly five minutes of wall-clock time, the system creates a perfectly level playing field. If an agent decides to make a neural network massively deeper, it must simultaneously find computational efficiencies elsewhere to ensure it still finishes within the five-minute budget. This forces the AI to find efficient solutions rather than bloated, resource-heavy code.
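A hard wall-clock budget can be enforced from outside the training script with the standard library alone. This is a sketch of one possible harness, not a description of how the original repository enforces its limit; `run_with_budget` is a hypothetical helper, and the demo uses budgets of seconds rather than the 300 seconds a five-minute run would need.

```python
import subprocess
import sys
import time


def run_with_budget(cmd, budget_seconds):
    """Run a command and treat it as failed if it exceeds the wall-clock budget."""
    start = time.monotonic()
    try:
        # subprocess.run kills the child and raises TimeoutExpired on timeout.
        proc = subprocess.run(
            cmd, capture_output=True, text=True, timeout=budget_seconds
        )
    except subprocess.TimeoutExpired:
        return {
            "finished": False,
            "returncode": None,
            "stdout": "",
            "elapsed": time.monotonic() - start,
        }
    return {
        "finished": True,
        "returncode": proc.returncode,
        "stdout": proc.stdout,
        "elapsed": time.monotonic() - start,
    }


# A fast "experiment" that prints its score, and a slow one that blows the budget.
fast = run_with_budget([sys.executable, "-c", "print(0.97)"], budget_seconds=30)
slow = run_with_budget(
    [sys.executable, "-c", "import time; time.sleep(5)"], budget_seconds=0.5
)
print(fast["finished"], slow["finished"])
```

Treating an over-budget run as a plain failure, rather than extending the deadline, is what keeps every experiment comparable.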

Strict Separation of Concerns

Karpathy’s architecture defines clear boundaries, and the most successful adaptations keep them. The loop works best when divided into three distinct zones:

- A locked preparation file that handles data gathering and is completely off-limits to the AI.

- A highly controlled sandbox file where the AI is permitted to edit code and run logic.

- A human-written markdown file containing the high-level strategy and goals.

When developers maintain this strict separation, the agent has creative freedom within a safe, bounded environment.
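The separation can also be checked mechanically rather than trusted. The sketch below fingerprints the off-limits files before an experiment and rejects the run if any of them changed. The file names `prepare.py`, `train.py`, and `program.md` are illustrative, and treating the strategy document as locked is an assumption of this sketch.

```python
import hashlib
import tempfile
from pathlib import Path


def fingerprint(paths):
    """Map each path to the SHA-256 digest of its current contents."""
    return {str(p): hashlib.sha256(Path(p).read_bytes()).hexdigest() for p in paths}


def violations(before, after):
    """Return locked files whose contents differ between two snapshots."""
    return sorted(path for path, digest in before.items() if after.get(path) != digest)


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    locked = [root / "prepare.py", root / "program.md"]  # off-limits to the agent
    sandbox = root / "train.py"                          # the only editable file
    for f in locked + [sandbox]:
        f.write_text("original\n", encoding="utf-8")

    before = fingerprint(locked)

    # A well-behaved experiment touches only the sandbox file.
    sandbox.write_text("agent edit\n", encoding="utf-8")
    ok_run = violations(before, fingerprint(locked))

    # A misbehaving experiment rewrites the locked preparation file.
    locked[0].write_text("tampered\n", encoding="utf-8")
    bad_run = violations(before, fingerprint(locked))

print(ok_run, [Path(p).name for p in bad_run])
```

The same check doubles as a guard on the metric: if the evaluation code lives in a locked file, a changed digest means earlier scores are no longer comparable.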

Micro-optimizations Over Paradigm Shifts

I observed that the framework consistently thrives at finding micro-optimizations, such as tuning hyperparameters, adjusting batch sizes, or refining positional encodings, rather than inventing entirely new architectures. The AI excels at exploring the tedious, high-dimensional space of existing code, squeezing out 10% to 20% performance gains. It replaces the grueling, manual grind of scientific testing, but it does not replace the human need for high-level creative direction.

Recommendations for Starting with Autoresearch Today

Based on everything I’ve analyzed across these diverse implementations, if I were advising someone starting today, I would emphasize preparation over execution.

- Shift Your Mindset to “Arena Designer”: The human’s job in this workflow is no longer to write Python; it is to write the system prompts, define the constraints, and design the evaluation metrics. Spend 90% of your time ensuring your objective metric is perfectly calibrated and completely un-gameable, because an AI agent will relentlessly exploit any loophole in your scoring system to artificially inflate its numbers.

- Insist on a “Dry Run” Phase: Before letting the loop run overnight, test the agent on an incredibly small dataset, perhaps just 10,000 tokens, to ensure that the code compiles, the dependencies are correct, and the environment is stable. Only after you have actively babysat the first ten successful iterations should you trust the system enough to run autonomously.

- Strictly Limit the Scope: Do not ask the agent to build a business or optimize a complex, multi-step sales funnel. Point it exclusively at closed-loop, text-based problems where the input and output can be verified locally and instantly, ensuring the cost of failure remains negligible.

Final Takeaway: The Honest Version of Karpathy Autoresearch

The honest truth about Karpathy’s Autoresearch framework is that it offers a significant glimpse into the future of work, but it is not a magical shortcut. It represents a shift from manually typing code to orchestrating automated scientific methods.

Most people get this wrong by assuming the AI is bringing its own judgment to the table. It is not. The AI is simply a tireless engine capable of running thousands of rapid, brute-force iterations. The system is entirely dependent on the human’s ability to define what “winning” looks like through an airtight, objective mathematical score.

If you attempt to apply this loop to subjective tasks, slow-moving environments, or scenarios where failure is expensive, the system will rapidly fail and burn through your resources. However, if you can successfully bound an environment, define a clear metric, and establish a fast feedback loop, you possess the blueprint for a relentless optimization engine. You are no longer the researcher; you become the architect of the laboratory.