Vercel vs Cloudflare: Deployment Wars Analyzed

Vercel vs Cloudflare: The Analyst’s View on Deployment Wars

Why This Matters Now

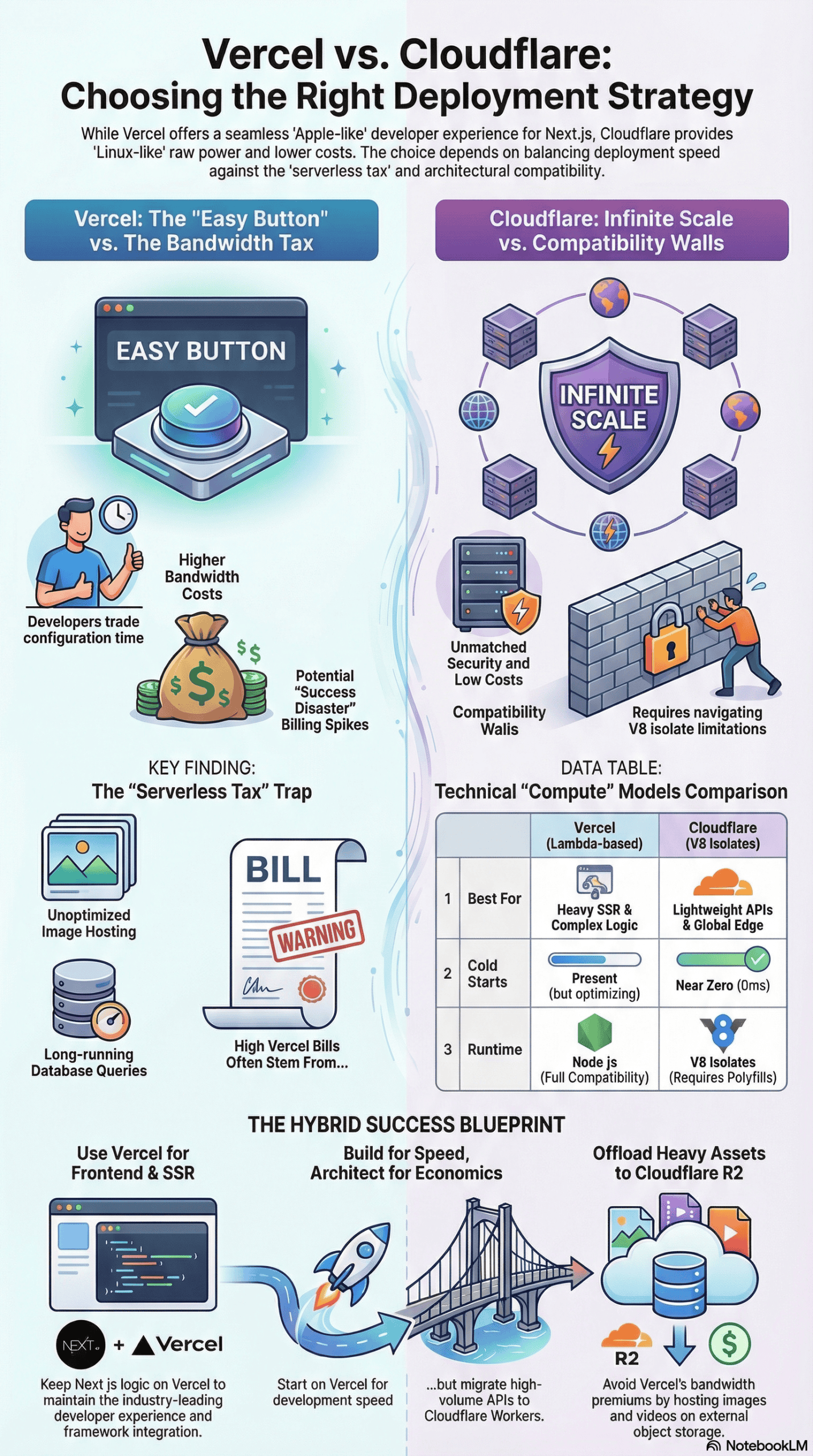

For years, the choice for frontend developers seemed simple: use Vercel for the best developer experience or Cloudflare for raw performance and security. However, the lines have blurred significantly. I’ve observed growing frustration in the developer community, fueled by viral stories of massive serverless bills and heated debates over benchmark rigging.

The Vercel vs. Cloudflare decision isn’t just a preference anymore; it’s about financial risk and architectural survival. Beginners often expect Vercel to be a magic “easy button,” while enterprises view Cloudflare as a cost-saving savior. The reality I’ve found is much messier. The common advice to “just use Next.js on Vercel” is increasingly challenged by those hitting the “serverless tax” wall.

How I Approached This Analysis

My analysis went beyond simple feature lists. I delved into dozens of hours of technical breakdowns, live benchmark tests, and heated discussions from industry leaders like Theo Browne and Mehul Mohan, as well as the engineering teams at both companies. I studied the fallout of viral billing incidents and reviewed the code architectures that caused them.

My goal was to look past the marketing “triangles” and “orange clouds” to understand what truly happens when you deploy real code to these platforms. This analysis brings together experiences from startups, indie hackers, and enterprise engineers, meticulously separating hype from reality.

What People Expect to Happen

When developers choose Vercel, they expect the “Apple experience.” They anticipate zero configuration: they push code to GitHub, and Vercel handles the rest. They expect Next.js to run perfectly because Vercel maintains the framework. The expectation is that they will pay a premium for this ease, but it will be worth it to avoid “DevOps hell.”

Conversely, when developers look at Cloudflare, they expect the “Linux experience.” They anticipate a steeper learning curve but believe they will gain enterprise-grade security, infinite scalability, and significantly lower costs. They expect the “Edge” to make their site faster for everyone, everywhere, instantly.

What Actually Happens in Practice

In practice, the journey is rarely linear.

On Vercel, the initial experience is indeed magical. The “Git-to-deploy” pipeline is seamless. However, as I tracked real-world usage, a pattern emerged: the “success disaster.” A project goes viral or hits a specific inefficiency, and the bill explodes. This isn’t usually due to the compute itself, but often to bandwidth and image optimization costs. I noticed that while Vercel’s fluid compute is making serverless cheaper, their bandwidth pricing remains a significant friction point compared to competitors.

On Cloudflare, the “Edge” promise often hits a compatibility wall. Developers expecting to just lift-and-shift their Node.js apps often realize that Cloudflare Workers run on V8 isolates, not Node. This means standard packages might fail, and debugging becomes a hunt for polyfills. Furthermore, I found that while the Edge is fast for static assets, it can actually be slower for database-heavy applications if your database is centralized (e.g., in US-East) and your Edge worker is in Sydney, causing massive latency on round-trips.
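The Sydney example can be made concrete with a back-of-the-envelope model. This is a minimal sketch: the names `RequestPath` and `estimateLatencyMs` and every millisecond figure are illustrative assumptions, not measurements from either platform.

```typescript
// Back-of-the-envelope latency model. Every number here is an illustrative
// assumption, not a measurement from either platform.
interface RequestPath {
  userToComputeMs: number;   // round trip: user <-> where the code runs
  computeToDbMs: number;     // round trip: code <-> database
  sequentialQueries: number; // queries that must run one after another
}

function estimateLatencyMs(path: RequestPath): number {
  // One hop from the user, plus one database round trip per dependent query.
  return path.userToComputeMs + path.sequentialQueries * path.computeToDbMs;
}

// User in Sydney, database in US-East, five dependent queries per page.
const edgeNearUser: RequestPath = { userToComputeMs: 10, computeToDbMs: 200, sequentialQueries: 5 };
const regionNearDb: RequestPath = { userToComputeMs: 200, computeToDbMs: 2, sequentialQueries: 5 };

const edgeTotalMs = estimateLatencyMs(edgeNearUser);   // 10 + 5 * 200 = 1010
const regionTotalMs = estimateLatencyMs(regionNearDb); // 200 + 5 * 2 = 210
```

Under these assumed numbers, the code running next to the user is the slower option, because the long hop to the database is paid once per dependent query instead of once per request.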

The Most Common Failure Points

Through my analysis of post-mortems and debugging sessions, I identified these specific failure points:

1. The “Serverless Tax” Trap (Vercel)

The most common financial failure on Vercel comes from treating it like a VPS. Storing large assets (videos, high-res images) in the public folder, or shipping unoptimized API calls, can lead to significant financial surprises. I reviewed cases where a single inefficient database query loop resulted in thousands of dollars in overage because the serverless function “waited” for the database and was billed for execution time for the entire duration. For more on this, consider this video analysis on serverless costs.
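To see why a query loop is so expensive under duration-based billing, here is a rough sketch. The `Invocation` shape, the helper names, and the timings (100 queries, 50 ms per round trip, 1 ms of CPU per row) are assumptions chosen for illustration, not figures from any real incident or price list.

```typescript
interface Invocation {
  cpuMs: number;    // time spent executing code
  ioWaitMs: number; // time spent idle, waiting on the database
}

// Under duration-based billing, idle waiting is billed like real work.
function billedWallClockMs(inv: Invocation): number {
  return inv.cpuMs + inv.ioWaitMs;
}

// N queries issued one at a time: N round trips of waiting.
function sequentialLoop(queries: number, dbRoundTripMs: number, cpuPerRowMs: number): Invocation {
  return { cpuMs: queries * cpuPerRowMs, ioWaitMs: queries * dbRoundTripMs };
}

// The same rows fetched in one batched query: a single round trip.
function batchedQuery(rows: number, dbRoundTripMs: number, cpuPerRowMs: number): Invocation {
  return { cpuMs: rows * cpuPerRowMs, ioWaitMs: dbRoundTripMs };
}

const loopMs = billedWallClockMs(sequentialLoop(100, 50, 1)); // 100 + 5000 = 5100
const batchMs = billedWallClockMs(batchedQuery(100, 50, 1));  // 100 + 50 = 150
```

With these assumed timings the loop is billed for 34 times as much duration as the batched version, even though both do the same amount of actual computation.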

2. The “Isolate” Compatibility Shock (Cloudflare)

On Cloudflare, the failure is often architectural. Developers try to run heavy backend logic or specific Node libraries that simply don’t exist in the Workers runtime. I also observed that Edge computing isn’t a silver bullet. For CPU-intensive tasks like rendering complex pages, Cloudflare Workers can sometimes be 2x to 6x slower than Vercel’s Lambda-based infrastructure if not carefully optimized.

3. The Proxy War Confusion

A structural failure occurs when users try to use both platforms together. I studied the “bizarre and consistently brewing drama” where Vercel and Cloudflare services conflict. Using Cloudflare’s proxy in front of Vercel can break Vercel’s threat detection and geolocation features, leading to suboptimal routing where US traffic is routed through Europe. You can see this detailed breakdown of proxying issues for more information.
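If you do end up with Cloudflare proxying in front of another host, application code can at least read the visitor details that Cloudflare forwards. The sketch below is an assumption-laden illustration: `resolveVisitor` and `HeaderMap` are hypothetical names, header keys are assumed to be lowercased already, and it relies on the `CF-Connecting-IP` and `CF-IPCountry` headers Cloudflare adds and the `x-forwarded-for` and `x-vercel-ip-country` headers Vercel sets. It does nothing for Vercel's own platform-level threat detection or routing.

```typescript
type HeaderMap = Record<string, string | undefined>;

interface Visitor {
  ip: string | undefined;
  country: string | undefined;
  source: "cloudflare" | "vercel" | "unknown";
}

function resolveVisitor(headers: HeaderMap): Visitor {
  // Behind Cloudflare's proxy, the origin sees Cloudflare's address, so prefer
  // the headers Cloudflare adds. Only trust them if all traffic is known to
  // arrive through Cloudflare; otherwise a client could spoof them.
  const cfIp = headers["cf-connecting-ip"];
  if (cfIp !== undefined) {
    return { ip: cfIp, country: headers["cf-ipcountry"], source: "cloudflare" };
  }
  // Direct traffic: fall back to the first entry of x-forwarded-for.
  const forwarded = headers["x-forwarded-for"];
  if (forwarded !== undefined) {
    const first = (forwarded.split(",")[0] ?? "").trim();
    return { ip: first, country: headers["x-vercel-ip-country"], source: "vercel" };
  }
  return { ip: undefined, country: undefined, source: "unknown" };
}

// Illustrative inputs using documentation-reserved IP ranges.
const proxied = resolveVisitor({
  "cf-connecting-ip": "203.0.113.7",
  "cf-ipcountry": "US",
  "x-forwarded-for": "198.51.100.1",
  "x-vercel-ip-country": "DE",
});
const direct = resolveVisitor({
  "x-forwarded-for": "203.0.113.7, 198.51.100.1",
  "x-vercel-ip-country": "US",
});
```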

What Consistently Works (Across Many Experiences)

Despite the failures, clear patterns of success exist.

- For Vercel: For Next.js frontends, Vercel remains the undisputed king. Its developer experience (DX) is unmatched. Vercel’s new fluid compute model bills CPU only while code is actively running rather than while it “waits” on I/O, which significantly reduces costs for AI wrappers and API-heavy applications. The smartest teams I analyzed use Vercel for code, but offload heavy assets (images, videos) to cheaper storage like S3, R2, or UploadThing. This bypasses Vercel’s bandwidth premiums.

- For Cloudflare: Cloudflare is unbeatable for serving static files and handling lightweight logic like redirects or authentication checks at the edge. For high-volume, simple API endpoints, Cloudflare Workers offer incredible value. They are often free or cost pennies, whereas Vercel could cost hundreds. If your users are truly global and you can distribute your data using Cloudflare D1 or KV, the performance benefits are real.
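As an illustration of the “lightweight logic” that suits the edge, here is a minimal sketch of a redirect and authentication gate written as a pure function. The names `decideAtEdge` and `REDIRECTS`, the `/dashboard` prefix, and the `session` cookie name are all hypothetical; in a real Worker this decision would be called from the fetch handler, and the cookie would need to be verified, not merely detected.

```typescript
type EdgeDecision =
  | { kind: "redirect"; location: string; status: 301 }
  | { kind: "reject"; status: 401 }
  | { kind: "pass" };

// Hypothetical redirect table; a real one might live in KV or config.
const REDIRECTS: Record<string, string | undefined> = { "/old-pricing": "/pricing" };

function decideAtEdge(pathname: string, cookieHeader: string | null): EdgeDecision {
  const target = REDIRECTS[pathname];
  if (target !== undefined) {
    return { kind: "redirect", location: target, status: 301 };
  }
  if (pathname.startsWith("/dashboard")) {
    // Presence check only: this does not validate the session value.
    const hasSession = (cookieHeader ?? "")
      .split(";")
      .some((cookie) => cookie.trim().startsWith("session="));
    if (!hasSession) {
      return { kind: "reject", status: 401 };
    }
  }
  // Everything else is forwarded to the origin untouched.
  return { kind: "pass" };
}
```

The function touches no database and does almost no CPU work, which is the profile where per-request edge pricing tends to be most favorable.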

What I Would Do Differently If Starting Today

Based on everything I’ve analyzed, here is how I would architect a new project to avoid the common pitfalls:

- Don’t Host Heavy Assets on Vercel: I would immediately set up an external object storage (like Cloudflare R2 or AWS S3) for any user-generated content or large media. Relying on the public folder in Vercel is a ticking time bomb for bandwidth costs.

- Use Cloudflare for the “Dumb” Stuff: I would use Cloudflare for DNS, caching, and basic security (WAF). It handles the bulk of the traffic assault for free or cheap.

- Choose Compute Based on “State”:

  - If my app requires heavy server-side rendering (SSR) and connects to a centralized database (like Postgres in US-East), I would stick with Vercel (or a traditional VPS) to keep compute close to the data.

  - If I am building a highly distributed app or a simple API wrapper, I would choose Cloudflare Workers to take advantage of the 0ms cold starts and low cost.

- Cap My Spend: Regardless of the platform, I would set up spend management limits immediately. The “surprise bill” stories almost always stem from a lack of hard limits. For guidance on setting up cost controls, check official documentation like Vercel’s billing documentation.
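The first recommendation in this list, keeping heavy assets out of the public folder, can be sketched as a small URL helper. This is only an illustration: `MEDIA_BASE_URL` points at a hypothetical bucket domain, and the `HEAVY_EXTENSIONS` list and the `assetUrl` name are assumptions you would adapt to your own project.

```typescript
// Hypothetical public domain for an external bucket (for example R2 or S3).
const MEDIA_BASE_URL = "https://media.example.com";

// Assumed list of file types considered too heavy to serve from the app host.
const HEAVY_EXTENSIONS = [".mp4", ".webm", ".mov", ".zip", ".png", ".jpg"];

function assetUrl(path: string): string {
  const normalized = path.startsWith("/") ? path : "/" + path;
  const lower = normalized.toLowerCase();
  const isHeavy = HEAVY_EXTENSIONS.some((ext) => lower.endsWith(ext));
  // Heavy files resolve to the external bucket; everything else stays local.
  return isHeavy ? MEDIA_BASE_URL + normalized : normalized;
}

const videoUrl = assetUrl("/videos/demo.mp4"); // "https://media.example.com/videos/demo.mp4"
const iconUrl = assetUrl("favicon.ico");       // "/favicon.ico"
```

Routing every media reference through one helper like this means the storage decision lives in a single place, so moving assets later does not require touching every component.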

Final Takeaway: The Honest Version

The truth is that Vercel is selling you time, while Cloudflare is selling you infrastructure.

Vercel charges a premium to save you hours of configuration. If you are a small team or a solo developer building a Next.js app, Vercel is likely worth the cost, provided you understand how to offload your heavy assets.

Cloudflare is selling you the raw power of the internet’s backbone. It is cheaper and often faster for specific tasks, but it demands you understand how the web actually works: from V8 isolates to cache headers.

If I had to summarize the current situation: build on Vercel for speed of development, but architect toward Cloudflare for economics at scale. The moment your bill hits three digits on Vercel, it’s time to look at what parts of your stack belong on the orange cloud.